Packet analysis is invaluable for troubleshooting and monitoring networks. It used to be confined to physical networks, but that is no longer the case.

The vSphere Distributed Virtual Switch can now mirror selected virtual network traffic and send it to a packet monitoring console using ERSPAN. Yes, that’s right: with the Distributed Virtual Switch you can monitor network traffic in the virtual realm even if the traffic never actually hits the physical wire.

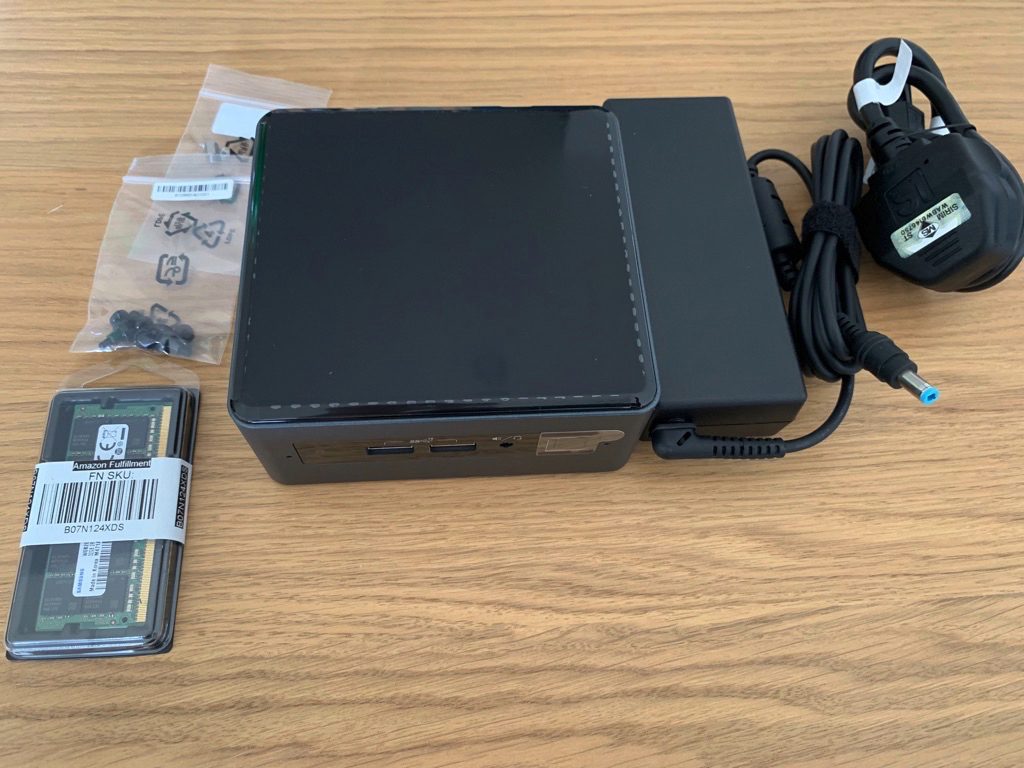

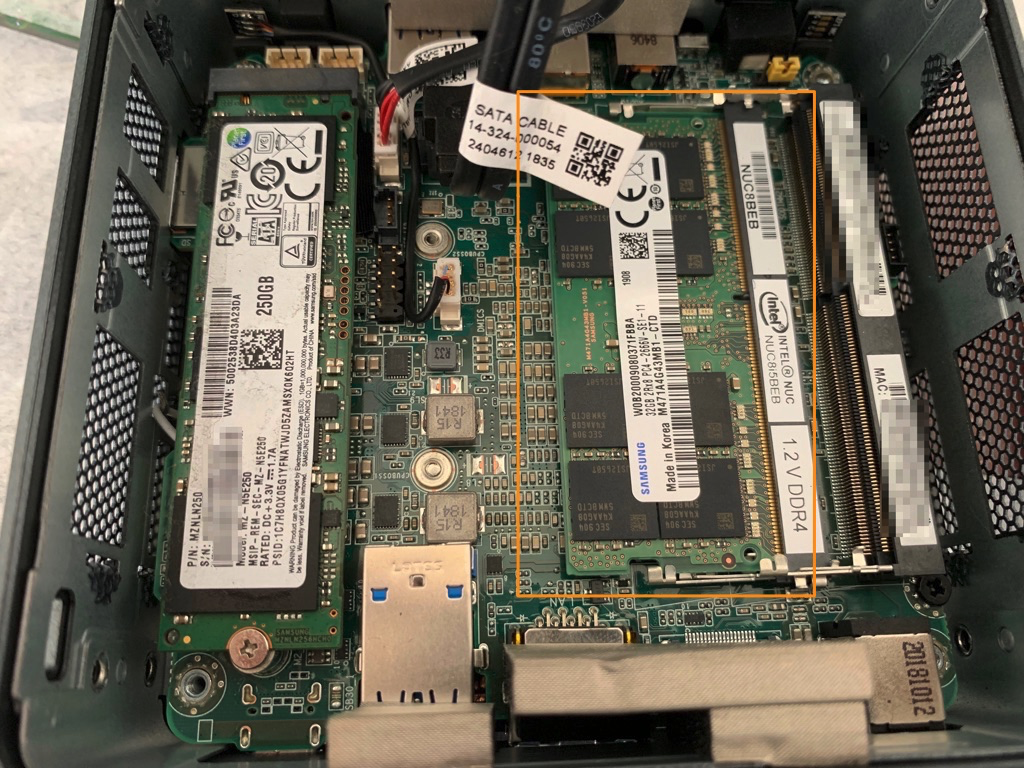

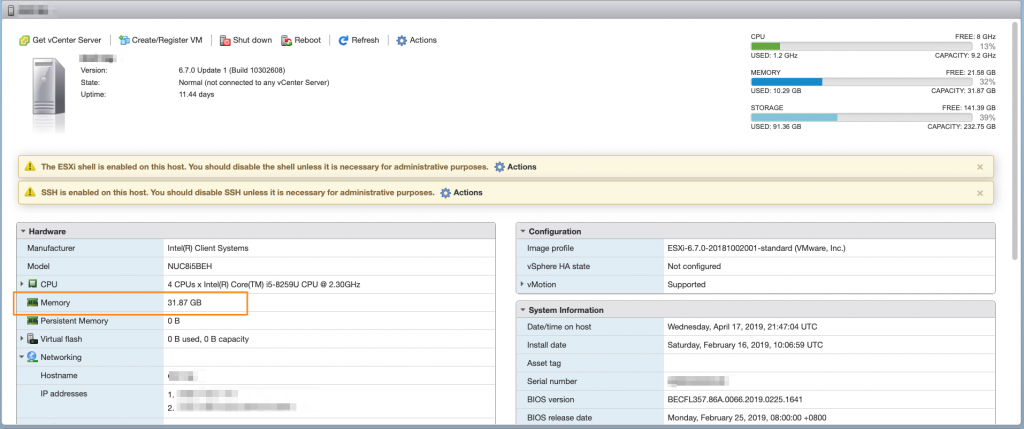

I haven’t seen much material covering this so far, so I thought I’d show how it works. For this blog post, I used the following:

- Distributed Virtual Switch (vSphere Enterprise Plus)

- Wireshark installed in a monitoring console (my personal laptop)

- A VM which we want to monitor (a Windows 7 VM which is my jump box VM)

Let’s start by setting up Wireshark for packet capture on the monitoring console. Open Wireshark and go to Capture -> Interfaces.

That should open up a list of interfaces which we can capture from. I’d like to capture on the “Local Area Connection”, but first it’s a good idea to find out that interface’s IP address, since we’ll need to set it as the receiver for the ERSPAN-captured traffic. Click on “Options”.

We look for the “Local Area Connection” again and note the IP address associated with the chosen receiving interface. In this case, it’s 10.2.1.110. We’ll tick the checkbox for the interface, and then click on “Start”.

Just like that, Wireshark will start dumping out all the traffic it gets on the interface. In this case, we only want to see traffic captured via ERSPAN on the Distributed Virtual Switch. Since ERSPAN encapsulates traffic in GRE, that’s what we’ll filter for. Type “gre” into the filter field and click on “Apply”, which should immediately filter out all the “noise” packets.
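To make it a bit clearer what the “gre” filter is matching, here is a minimal Python sketch that parses the IPv4 and GRE headers of a raw packet and reports what the GRE payload claims to be. The function name `parse_gre` and the lookup table are my own illustration, not anything from Wireshark or vSphere, and I’m assuming plain IPv4 with the standard GRE checksum, key and sequence flags. Which GRE protocol type the Distributed Virtual Switch actually emits is not something this post verifies, so the table simply lists a few well-known values.

```python
import struct

GRE_IP_PROTOCOL = 47  # IP protocol number assigned to GRE

# A few well-known GRE protocol types (illustrative, not exhaustive).
GRE_PAYLOAD_TYPES = {
    0x6558: "Transparent Ethernet Bridging",
    0x88BE: "ERSPAN Type II",
    0x22EB: "ERSPAN Type III",
}


def parse_gre(ip_packet: bytes) -> dict:
    """Parse the IPv4 and GRE headers of a raw packet (hypothetical helper).

    Returns the GRE protocol type, any optional key/sequence values,
    and the byte offset at which the data after the GRE header starts.
    """
    if len(ip_packet) < 20:
        raise ValueError("too short for an IPv4 header")
    if ip_packet[0] >> 4 != 4:
        raise ValueError("not an IPv4 packet")
    ip_header_len = (ip_packet[0] & 0x0F) * 4
    if ip_packet[9] != GRE_IP_PROTOCOL:
        raise ValueError("IP payload is not GRE")

    # Base GRE header: 16 bits of flags/version, 16 bits of protocol type.
    flags, proto = struct.unpack_from("!HH", ip_packet, ip_header_len)
    offset = ip_header_len + 4
    fields = {
        "protocol_type": proto,
        "payload_kind": GRE_PAYLOAD_TYPES.get(proto, "unknown"),
    }

    if flags & 0x8000:  # C bit: checksum + reserved word present
        offset += 4
    if flags & 0x2000:  # K bit: key present
        (fields["key"],) = struct.unpack_from("!I", ip_packet, offset)
        offset += 4
    if flags & 0x1000:  # S bit: sequence number present
        (fields["sequence"],) = struct.unpack_from("!I", ip_packet, offset)
        offset += 4

    fields["payload_offset"] = offset
    return fields
```

For ERSPAN Type II, the bytes at `payload_offset` would start with the ERSPAN header itself, followed by the mirrored Ethernet frame; this sketch stops at the GRE layer and leaves that part to Wireshark.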

Now that Wireshark is set up correctly, let’s turn our attention to setting up ERSPAN. Select the Distributed Virtual Switch, which in my example is “dvSwitch”. Go to Settings -> Port Mirroring. Click on “New”.

In the pop-up dialog, select “Encapsulated Remote Mirroring (L3) Source”. This lets us send the mirrored traffic to a remote monitoring console over IP. Click “Next”.

Give the session a name, and change its status to “Enabled” to activate ERSPAN port mirroring. Click “Next”.

Add a network traffic source by clicking on the icon below. A source is the object which we’d like to capture network traffic from.

A list of ports will pop up. You can choose one or more VMs, or one or more vmknics, to monitor and capture from. In this case, let’s choose a Windows 7 desktop VM. Click “OK”.

You can see that the VM we’ve selected is now shown as one of the sources of traffic for packet capture. Click “Next”.

Now, we’ll need to tell the Distributed Virtual Switch where to send the captured traffic. Click on the “+” sign to add a destination.

This is where we type in the IP address of the monitoring console running Wireshark. If you remember, we noted while setting up Wireshark that the IP address of the receiving interface was 10.2.1.110, so that’s what we type in before clicking “OK”.

That done, we’ll review the settings one last time, and make sure everything is in order. Click “Finish” when everything is good to go.

Jumping back to the Wireshark console, we should immediately see traffic being sent over the network by the Distributed Virtual Switch and showing up on the screen. On the Windows 7 desktop, I used a browser to access http://kacangisnuts.com/, and the result is quite a bit of captured HTTP traffic. Just for fun, I selected an HTTP GET request and followed its TCP stream to see what was being communicated.

The captured traffic stream is pieced together and shown below; it’s the HTTP conversation between the Windows 7 desktop’s browser and my blog server.
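If you’re curious what “pieced together” means here, the rough idea is to order the captured TCP segments by sequence number and join their payloads. Below is a minimal Python sketch of that idea. It is not Wireshark’s implementation; `reassemble_stream` is a hypothetical helper of mine that handles one direction of a connection, drops retransmissions, stops at the first gap, and ignores 32-bit sequence number wraparound.

```python
def reassemble_stream(segments, initial_seq):
    """Join TCP payloads in sequence order (simplified sketch).

    segments:    iterable of (sequence_number, payload_bytes) pairs
                 for one direction of a connection
    initial_seq: sequence number of the first payload byte
    """
    stream = bytearray()
    next_seq = initial_seq
    for seq, payload in sorted(segments, key=lambda s: s[0]):
        end = seq + len(payload)
        if end <= next_seq:
            continue  # retransmission: bytes already in the stream
        if seq > next_seq:
            break  # gap: a segment is missing, stop here
        # Skip any leading bytes that overlap what we already have.
        stream += payload[next_seq - seq:]
        next_seq = end
    return bytes(stream)


# Usage with made-up segments: out of order, with one retransmission.
first = b"GET / HTTP/1.1\r\n"
second = b"Host: example.test\r\n\r\n"
segments = [(1000 + len(first), second), (1000, first), (1000, first)]
request = reassemble_stream(segments, 1000)
```

Stopping at the first gap keeps the sketch honest: a mirrored capture can drop packets, and a real tool would flag the missing bytes rather than silently join what is left.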

So there you have it: a quick blog entry I whipped up to show how to use ERSPAN for packet capture and analysis. It shows how, with the right tools, you can still use good ol’ conventional network analysis and troubleshooting methodologies to monitor and solve problems for VMs, just like you would for systems in the physical network world.